|

It is increasingly common to use multiple cloud services as building blocks to assemble a modern event-driven application. Using purpose-built services to accomplish a particular task ensures developers get the best capabilities for their use case. However, communication between services can be difficult if they use different technologies to communicate, meaning that you need to learn the nuances of each service and how to integrate them with each other. We usually need to create integration code (or “glue” code) to connect and bridge communication between services. Writing glue code slows our velocity, increases the risk of bugs, and means we spend our time writing undifferentiated code rather than building better experiences for our customers.

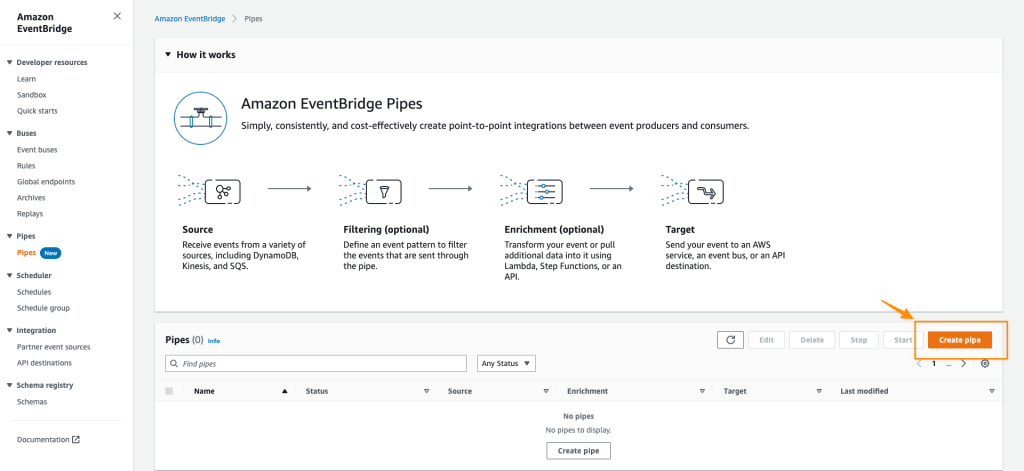

Introducing Amazon EventBridge Pipes

Today, I’m excited to announce Amazon EventBridge Pipes, a new feature of Amazon EventBridge that makes it easier for you to build event-driven applications by providing a simple, consistent, and cost-effective way to create point-to-point integrations between event producers and consumers, removing the need to write undifferentiated glue code.

The simplest pipe consists of a source and a target. An optional filtering step allows only specific source events to flow into the Pipe and an optional enrichment step using AWS Lambda, AWS Step Functions, Amazon EventBridge API Destinations, or Amazon API Gateway enriches or transforms events before they reach the target. With Amazon EventBridge Pipes, you can integrate supported AWS and self-managed services as event producers and event consumers into your application in a simple, reliable, consistent and cost-effective way.

Amazon EventBridge Pipes bring the most popular features of Amazon EventBridge Event Bus, such as event filtering, integration with more than 14 AWS services, and automatic delivery retries.

How Amazon EventBridge Pipes Works

Amazon EventBridge Pipes provides you a seamless means of integrating supported AWS and self-managed services, favouring configuration over code. To start integrating services with EventBridge Pipes, you need to take the following steps:

- Choose a source that is producing your events. Supported sources include: Amazon DynamoDB, Amazon Kinesis Data Streams, Amazon SQS, Amazon Managed Streaming for Apache Kafka, and Amazon MQ (both ActiveMQ and RabbitMQ).

- (Optional) Specify an event filter to only process events that match your filter (you’re not charged for events that are filtered out).

- (Optional) Transform and enrich your events using built-in free transformations, or AWS Lambda, AWS Step Functions, Amazon API Gateway, or EventBridge API Destinations to perform more advanced transformations and enrichments.

- Choose a target destination from more than 14 AWS services, including Amazon Step Functions, Kinesis Data Streams, AWS Lambda, and third-party APIs using EventBridge API destinations.

Amazon EventBridge Pipes provides simplicity to accelerate development velocity by reducing the time needed to learn the services and write integration code, to get reliable and consistent integration.

EventBridge Pipes also comes with additional features that can help in building event-driven applications. For example, with event filtering, Pipes helps event-driven applications become more cost-effective by only processing the events of interest.

Get Started with Amazon EventBridge Pipes

Let’s see how to get started with Amazon EventBridge Pipes. In this post, I will show how to integrate an Amazon SQS queue with AWS Step Functions using Amazon EventBridge Pipes.

The following screenshot is my existing Amazon SQS queue and AWS Step Functions state machine. In my case, I need to run the state machine for every event in the queue. To do so, I need to connect my SQS queue and Step Functions state machine with EventBridge Pipes.

First, I open the Amazon EventBridge console. In the navigation section, I select Pipes. Then I select Create pipe.

On this page, I can start configuring a pipe and set the AWS Identity and Access Management (IAM) permission, and I can navigate to the Pipe settings tab.

In the Permissions section, I can define a new IAM role for this pipe or use an existing role. To improve developer experience, the EventBridge Pipes console will figure out the IAM role for me, so I don’t need to manually configure required permissions and let EventBridge Pipes configures least-privilege permissions for IAM role. Since this is my first time creating a pipe, I select Create a new role for this specific resource.

Then, I go back to the Build pipe section. On this page, I can see the available event sources supported by EventBridge Pipes.

I select SQS and select my existing SQS queue. If I need to do batch processing, I can select Additional settings to start defining Batch size and Batch window. Then, I select Next.

On the next page, things get even more interesting because I can define Event filtering from the event source that I just selected. This step is optional, but the event filtering feature makes it easy for me to process events that only need to be processed by my event-driven application. In addition, this event filtering feature also helps me to be more cost-effective, as this pipe won’t process unnecessary events. For example, if I use Step Functions as the target, the event filtering will only execute events that match the filter.

I can use sample events from AWS events or define custom events. For example, I want to process events for returned purchased items with a value of 100 or more. The following is the sample event in JSON format:

{ “event-type”:”RETURN_PURCHASE”, “value”:100 }

Then, in the event pattern section, I can define the pattern by referring to the Content filtering in Amazon EventBridge event patterns documentation. I define the event pattern as follows:

{ “event-type”: [“RETURN_PURCHASE”], “value”: [{ “numeric”: [“>=”, 100] }] }

I can also test by selecting test pattern to make sure this event pattern will match the custom event I’m going to use. Once I’m confident that this is the event pattern that I want, I select Next.

In the next optional step, I can use an Enrichment that will augment, transform, or expand the event before sending the event to the target destination. This enrichment is useful when I need to enrich the event using an existing AWS Lambda function, or external SaaS API using the Destination API. Additionally, I can shape the event using the Enrichment Input Transformer.

The final step is to define a target for processing the events delivered by this pipe.

Here, I can select various AWS services supported by EventBridge Pipes.

I select my existing AWS Step Functions state machine, named pipes-statemachine.

In addition, I can also use Target Input Transformer by referring to the Transforming Amazon EventBridge target input documentation. For my case, I need to define a high priority for events going into this target. To do that, I define a sample custom event in Sample events/Event Payload and add the priority: HIGH in the Transformer section. Then in the Output section, I can see the final event to be passed to the target destination service. Then, I select Create pipe.

In less than a minute, my pipe was successfully created.

To test this pipe, I need to put an event into the Amaon SQS queue.

To check if my event is successfully processed by Step Functions, I can look into my state machine in Step Functions. On this page, I see my event is successfully processed.

I can also go to Amazon CloudWatch Logs to get more detailed logs.

Things to Know

Event Sources – At launch, Amazon EventBridge Pipes supports the following services as event sources: Amazon DynamoDB, Amazon Kinesis, Amazon Managed Streaming for Apache Kafka (Amazon MSK) alongside self-managed Apache Kafka, Amazon SQS (standard and FIFO), and Amazon MQ (both for ActiveMQ and RabbitMQ).

Event Targets – Amazon EventBridge Pipes supports 15 Amazon EventBridge targets, including AWS Lambda, Amazon API Gateway, Amazon SNS, Amazon SQS, and AWS Step Functions. To deliver events to any HTTPS endpoint, developers can use API destinations as the target.

Event Ordering – EventBridge Pipes maintains the ordering of events received from an event sources that support ordering when sending those events to a destination service.

Programmatic Access – You can also interact with Amazon EventBridge Pipes and create a pipe using AWS Command Line Interface (CLI), AWS CloudFormation, and AWS Cloud Development Kit (AWS CDK).

Independent Usage – EventBridge Pipes can be used separately from Amazon EventBridge bus and Amazon EventBridge Scheduler. This flexibility helps developers to define source events from supported AWS and self-managed services as event sources without Amazon EventBridge Event Bus.

Availability – Amazon EventBridge Pipes is now generally available in all AWS commercial Regions, with the exception of Asia Pacific (Hyderabad) and Europe (Zurich).

Visit the Amazon EventBridge Pipes page to learn more about this feature and understand the pricing. You can also visit the documentation page to learn more about how to get started.

Happy building!

— Donnie